Ardent Performance Computing

PGConf.dev 2026 Trip Summary

I’m back home from Vancouver. What a great week – in every way. I’ll try to share a few highlights here.

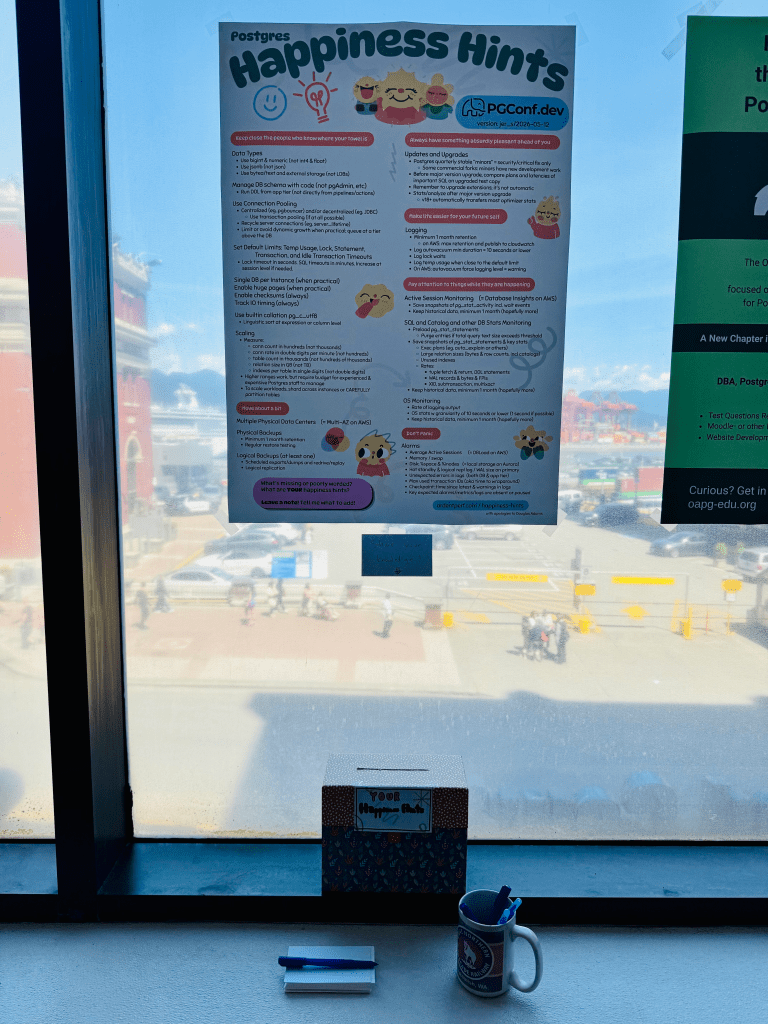

Updated Happiness Hints

First and foremost: after many years, the Happiness Hints have received a major update! Before the conference, I updated the hints based on all the feedback I’ve collected over the past few years. I then laid the hints out as a poster, which we printed for the pgconf.dev poster session. Throughout the week, I kept collecting feedback, marking up the poster with a sharpie as ideas came in. Special thanks to Laurenz Albe, David Rader, Sami Imseih, Ryan Booz and Nik Samokhvalov (Nik, you weren’t at the conference, but a happiness hint resulted from other discussions we’ve had). Of course I’m forgetting others whose feedback made the happiness hints better. After coming home from the conference, I incorporated all the notes I had – and the version that’s now published here at ardentperf.com is the latest & best version I’ve assembled so far.

Physical Replication and Postgres High Availability

An extraordinary number of Postgres users rely on physical replication for high availability. It’s been around for a long time and it works well. Nonetheless, there are a few rough edges, and over the years there have been various mailing list threads that haven’t been fully resolved.

I proposed a Friday unconference session on this topic, and the topic received enough votes to be selected. Notes from the unconference are available on the Postgres wiki. But the discussion extended far beyond the unconference; there were also hallway discussions over coffee (thanks Thomas Munro) and then continuing discussions over dinner at Joey Burrard and beers at Steamworks (thanks Ants Aasma).

The first question that everybody asks is “should Postgres have more HA capabilities in core?” A discussion along these lines consumed the first half of the unconference.

But I thought the most interesting train of thought was something more incremental – an idea that Postgres is missing a fundamental/overarching concept or first principle which could make a lot of problems easier to solve – a concept around cluster topology. There are a few ways this could look. A function or a view like pg_nodes or something? The ability on a hot standby to query for all of the replicas in the topology? How about a function that could be called on a hot standby when the primary is unreachable and return a list of potential candidates for promotion to be a new primary?
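To make the last of those questions a bit more concrete, here is a minimal Python sketch of what a "candidates for promotion" query might compute. Everything in it is an assumption for illustration: `Node` stands in for one row of a hypothetical `pg_nodes`-style view (no such view exists in Postgres today), and `promotion_candidates` is an invented name. The only real Postgres detail is the textual LSN format, two hexadecimal numbers separated by a slash.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """One row of a hypothetical pg_nodes-style view (not a real Postgres view)."""
    name: str
    role: str         # "primary" or "standby"
    reachable: bool   # whether this node can currently be contacted
    replay_lsn: str   # textual LSN such as "0/3000060"


def lsn_to_int(lsn: str) -> int:
    """Convert a textual LSN ("hi/lo", both hexadecimal) into a 64-bit integer."""
    hi, lo = lsn.split("/")
    return (int(hi, 16) << 32) | int(lo, 16)


def promotion_candidates(nodes: list[Node]) -> list[Node]:
    """Return reachable standbys, most advanced replay position first."""
    standbys = [n for n in nodes if n.role == "standby" and n.reachable]
    return sorted(standbys, key=lambda n: lsn_to_int(n.replay_lsn), reverse=True)


# Made-up topology: the primary and one standby are unreachable.
topology = [
    Node("pg1", "primary", False, "0/5000000"),
    Node("pg2", "standby", True, "0/4FFFF00"),
    Node("pg3", "standby", True, "0/4000000"),
    Node("pg4", "standby", False, "0/5000000"),
]
print([n.name for n in promotion_candidates(topology)])  # ['pg2', 'pg3']
```

Ranking by replay position is only one possible policy. A real design would also have to decide who is allowed to answer this question and how stale the topology information may be.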

My own idea is to consider the set of nodes in synchronous_standby_names as the “cluster” or “herd” of instances. (Jeff didn’t like the name “herd” but the word “cluster” already means something else in Postgres…) Maybe we can let people set the number of required synchronous standbys to “0” if they want a cluster with async replication. Which brings us to another challenge – managing changes to this parameter. First, how do we know the exact moment when every single connection and session is aware of a new set of cluster members? Remember that individual connections are responsible for ensuring transactions are replicated before acknowledging commits to clients. Second, how could we ensure that all of the replicas know about changes when adding or removing cluster members?
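As a rough illustration of reading synchronous_standby_names as a membership list, the Python sketch below parses the three documented forms of the setting (`FIRST n (...)`, `ANY n (...)`, and a plain comma-separated list, which behaves like `FIRST 1`). The `Herd` type and `parse_herd` function are hypothetical names invented here, and the parser is deliberately simplified: it does not handle double-quoted standby names or the special `*` entry. It also accepts a count of 0 purely to illustrate the suggestion above; that is an assumption of the sketch, not a statement about what Postgres accepts.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Herd:
    """Hypothetical view of synchronous_standby_names as a membership list."""
    method: str               # "FIRST" (priority-based) or "ANY" (quorum-based)
    num_sync: int             # standbys that must confirm each commit
    members: tuple[str, ...]  # standby names, in the order listed


# Matches "[FIRST|ANY] num_sync ( name [, ...] )"; simplified, no quoted names.
_PATTERN = re.compile(r"^\s*(?:(FIRST|ANY)\s+)?(\d+)\s*\((.*)\)\s*$", re.IGNORECASE)


def parse_herd(setting: str) -> Herd:
    """Parse a synchronous_standby_names value into a Herd."""
    m = _PATTERN.match(setting)
    if m:
        method = (m.group(1) or "FIRST").upper()
        num_sync = int(m.group(2))  # 0 is accepted here only for illustration
        names = m.group(3)
    else:
        # A plain list of names is treated as FIRST 1 (name, ...).
        method, num_sync, names = "FIRST", 1, setting
    members = tuple(n.strip() for n in names.split(",") if n.strip())
    return Herd(method, num_sync, members)


print(parse_herd("ANY 2 (pg2, pg3, pg4)"))
print(parse_herd("pg2, pg3"))
print(parse_herd("FIRST 0 (pg2, pg3)"))
```

Parsing the list is the easy part. The hard questions above, knowing when every session has seen a new membership and making sure every replica learns of it, are not addressed by a sketch like this.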

There are also challenges around logical replication slots (like losing them after two failovers in a row, or the inability to replicate them at all if decoding from standbys) – could a new cluster concept help? A new cluster concept also might help around managing backups of WAL across a cluster. Lots of interesting ideas!

Wait Events and Physical Reads and pg_stat_statements

I had a handful of short discussions with Sami Imseih and Lukas Fittl. First off, Sami has some patches for pg_stat_statements that I’m pretty excited about: improving concurrency around the LWLock and looking for ways to optimize the situation with the query text file.
