Open Source communities are trying to quickly adapt to the present rapid advances in technology. I would like to propose some clarity around something that should be common sense.

Automated emails are spam. They always have been. OpenClaw (and whatever new thing surfaces this summer) is no different.

Policies that ban automated emails and messages – including anything AI generated – are not only common sense, they aren’t even a change from how we’ve always worked. This includes automated comments on GitHub issues, automated PRs, automated patch submissions, and any kind of automated review. Automated reviews from Copilot, Snyk, and similar tools are OK if and only if they are configured by the owners of the repo/project. Common sense.

Enforcement of these policies – more than ever – depends on trust and relationships. I do think, for example, that non-native English speakers should be allowed to use AI to help them check their English. Used responsibly, AI tools can help a lot with language learning! Your grammar checker is probably based on some kind of LLM anyway. But a human must always press the “send” button on the message, and that human is responsible for the words they sent. Every open source project should have a policy that moderators can cite for blocking or banning an account when they suspect automated messages.

Tomas Vondra’s article “the AI inversion” is the latest of many good and thought-provoking pieces I’ve read, and it’s well worth the read. He’s getting at deeper problems than what I’m writing about here, and he has very good reasons for a much deeper level of concern about the impact of AI tooling on open source communities. These are interesting times and we don’t have all the answers yet.

A few more things I’ve recently read, which I think are good:

- CloudNativePG AI policy – https://github.com/cloudnative-pg/governance/blob/main/AI_POLICY.md

- Linux Foundation AI Policy – https://www.linuxfoundation.org/legal/generative-ai

- Bryan Cantrill, Oxide RFD – https://rfd.shared.oxide.computer/rfd/0576

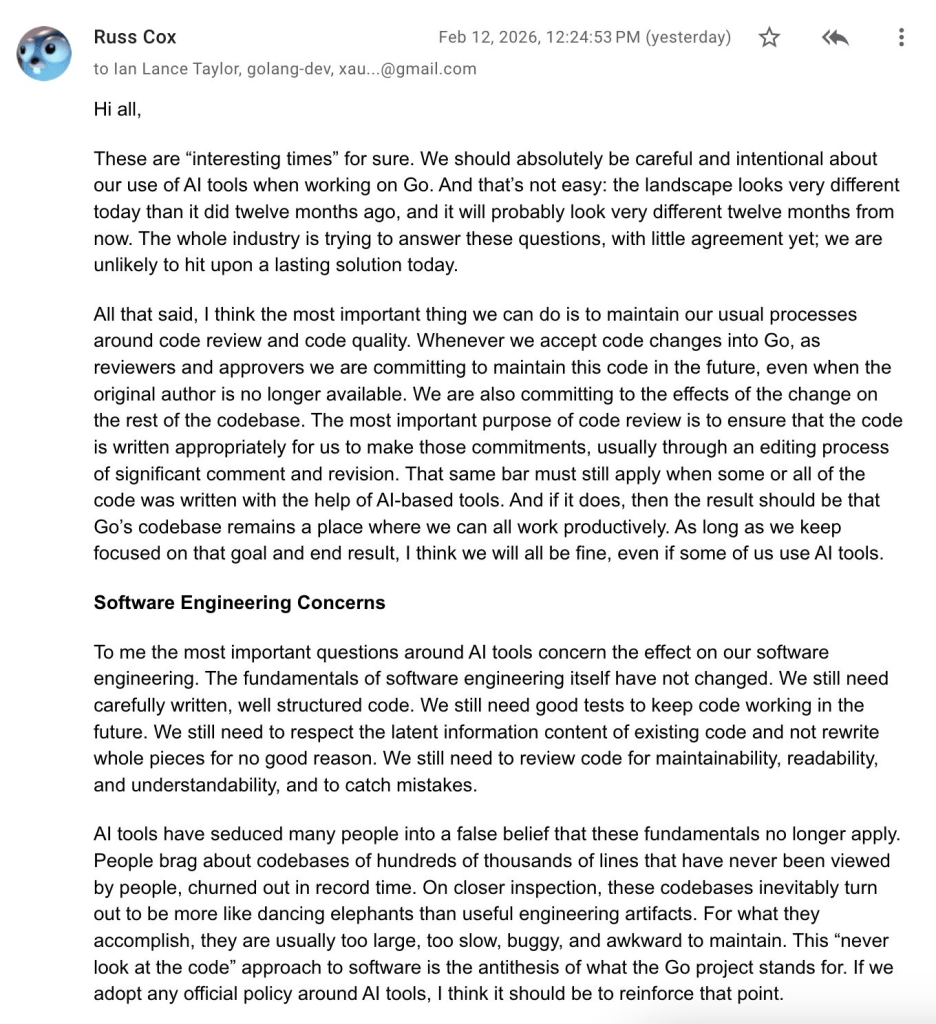

- Russ Cox on GoLang and AI – https://groups.google.com/g/golang-dev/c/4Li4Ovd_ehE/m/8L9s_jq4BAAJ?pli=1

- Jordan Tigani about AI @ MotherDuck – [long painful URL for LinkedIn post]


I’ve also been writing bits and pieces of partial thoughts over the past week or two, including my short blog post about the Scott Shambaugh situation. (And thank you to Kim Bruning for the thoughtful email exchanges about this blog! Please continue to keep this old guy on his toes, reasoning through things, and challenging his thinking!)

There have been a bunch of LinkedIn messages too; capturing them here:

- Mischa van den Burg wrote a LinkedIn post about whether ChatGPT in interviews is a red flag

- Brad Nicholson said “As someone that knows how to find that sort of info command line and has done so many, many times – I’d go to chat first, google second and the man pages last because the first two get me what I need faster than reading a man page.”

- Replying to Brad:

“I do the same thing, but we also understand this is in descending order of hallucination likelihood

one of my favorite ways to use agents is to write me a script that demonstrates a behavior they claim… by the time the script is working, the claim is often significantly revised – and at present i still usually have to prevent them from making the test script work by moving the goalposts”


- Replying to Phil Eaton’s post about Russ Cox’s perspective on the Go project’s approach (policy?) to AI:

- Russ Cox’s message is here

- i said “yes – the section here is a good excerpt” (referring to Phil’s excellent choice of what to screenshot)


- Replying to Kelsey Hightower’s post “Generative AI is a slop generation machine by default. You have to put in a lot of work to get something of quality from it.”

- It’s the same work I did before, just shifted left. I’m iterating on low-level detailed design spec and autogenerating code, rather than iterating on the code and trying to keep design docs in sync. I think of it as writing more of my code in detailed prose, flowcharts, sequence diagrams, and pseudocode – rather than writing it directly in the programming language and manually keeping the design docs in sync. But it’s the same work, minus time spent on syntax (which was never where the value was).

- Replying to Adam Jacob’s comment: I think it remains true that “you get out what you put in”


- Replying to Jordan Tigani’s post about MotherDuck AI policy:

- Tricky topic. I built a deeply detailed design for overhauling how auth works on a core platform…

* 291 prompts across 15 sessions over 3 days, comprising ~1,226 lines of prompt text

* final design document is 1,904 lines of markdown — a ratio of roughly 2 lines of human-written prompt for every 3 lines of design document output

a review of the full transcript showed a number of interesting characteristics of my prompts, including:

* Persistent effort to simplify the tool’s initial proposals

* Directly contributing critical domain knowledge

* Frequent insistence on precise terminology

Overall I’m satisfied and I think it’s a good doc that would have taken me 10x longer otherwise (especially research portions) – but I acknowledge mixed feelings.

one thing that’s clear: i obviously got the AI game backwards. i thought it was scored like golf, where a lower ratio of input-to-output is a better score


- My own LinkedIn post: “We need to re-think OSS contribution attribution in light of AI. More than ever, it’s important for committers to give credit on where the ideas are coming from. A committer can copy/paste someone else’s ideas into their own prompts, and they need to give appropriate credit.”

- Mentioning the Oxide RFD in my reply to Daniel Gustafsson:

- my thought is around crediting someone who participates meaningfully in the discussion, even if they didn’t author the final patch. a wall of text email that nobody entirely reads is not a meaningful contribution – but there are lots of ways AI can be part of a well-written email. it’s hard to find the objective line though about what this means.

email moderation is going to get harder. trust and relationships were always important, and now even more so. i think using AI for research or to assist with writing is a net positive – as long as the final written product is concise and well-communicated and understood by the author. AI is the tool, but it’s finally still a human relationship. oxide’s RFD is good https://rfd.shared.oxide.computer/rfd/0576 – responsibility, rigor and empathy remain fundamental. old-school email lists might have a small advantage here. and i hope we can stay open to new people who seem interested to join and contribute


- Replying to Adam Jacob’s post: “If you’re thinking to yourself “this 10x increase in capability to create software doesn’t matter, because writing software was never the bottleneck”, you’re drawing the wrong conclusions from a true statement. … [skipping middle section, but go read the whole thing bc its good] … We will rebuild everything around this capability. Everything.”

- what people miss: it doesn’t need to be 10x more code, it can be same code 10x faster (which often is very little code — but it would have taken much longer to get it right)

but Adam why are you telling everyone? i’m having so much fun right now, and once everyone figures it out then we’ll be back to the usual drill…


- I wrote a LinkedIn post about how I think moderation will get harder, then clarified a bit in reply to Andreas Scherbaum by pointing to Tomas Vondra’s blog because that’s much better than what I said.


My experience in the Java community is that they love copying and pasting stuff that you wrote before ai and taking credit for it… And they wonder why I refuse to create a PR ahead of time and sometimes in general. Even the simplest thing with the simplest of change like oh I wanted to change a couple of words in a sentence in a 10 line PR… Where it was easier than asking me to make the change. I get that it was easier than asking me to make the change but not giving me new committer the commit is tacky at best and certainly doesn’t entice people. Now we’re concerning about taking the prompts? Yeah that’s never going to happen. Also do people not realize that at least in the United States AI output is not copyrightable… Only a human can generate copyrightable content.


Posted by xenoterracide | February 23, 2026, 10:32 am